February 25, 2025

What is an LVM?

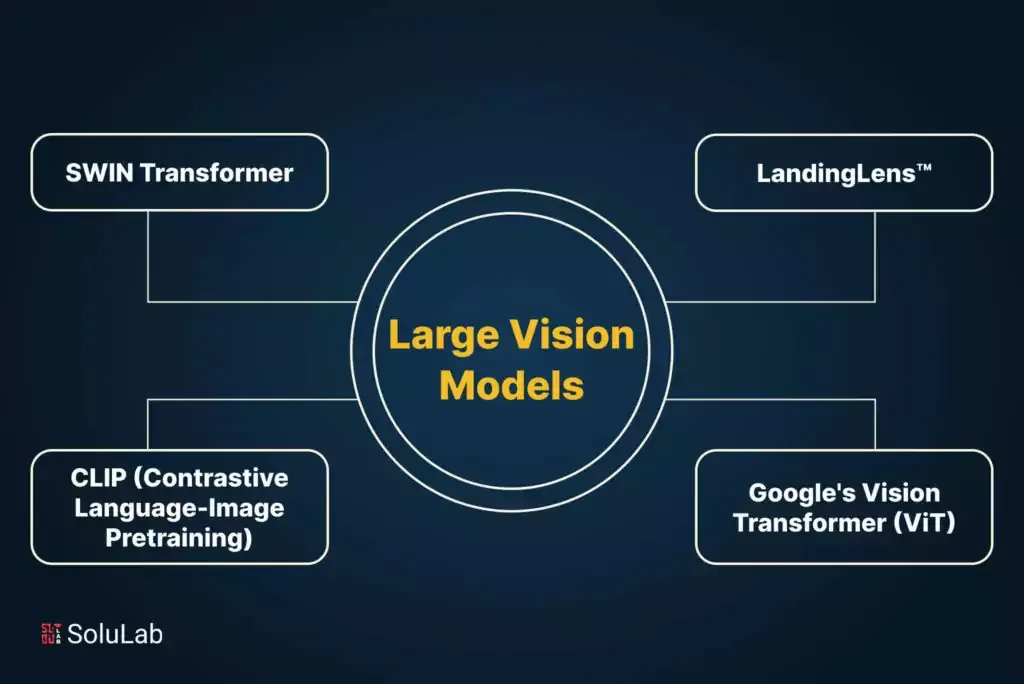

Large Language Models (LLMs) are now well-known thanks to ChatGPT’s phenomenal success. They can analyze and comprehend vast volumes of sophisticated data, including text, images, and other types of information, making them unique. These models analyze and learn from enormous volumes of data using deep learning techniques, which enables them to spot patterns, forecast the future, and produce high-quality results. Large language models’ capacity to build natural language material that closely mimics human writing is one of their main features. These models are helpful for applications like language translation, content generation, and chatbots since they can generate logical and persuasive written passages on various subjects. Similarly, LVMs can recognize and classify images with astounding precision. They can produce in-depth descriptions of what they see and recognize items, scenes, and even emotions that are depicted in photographs. These models’ distinctive abilities have several real-world applications in areas like artificial intelligence, computer vision, and natural language processing, and they have the potential to alter how we use technology and handle data fundamentally.

The applications of this are enormous. Imagine a computer looking into human tissues and counting the exact number of cancer cells. LVMs combined with the ever-evolving LLMs can count, classify, and predict the stage and rate of progression.

And like LLMs, LVMs can be trained through a technique called Visual Prompting. In this technique, a user prompts the model to produce the desired output by suggesting a pattern or image that the model has been trained to recognize and respond to in a certain way.

The Internet’s Blind Spot: Specialized Images and the LVM Gap

Unlike LLMs, which can generalize well from internet text to proprietary documents due to their inherent similarities, LVMs encounter a significant obstacle. Generic LVMs trained on internet images, filled with snapshots of everyday life, often struggle with images from specialized domains like manufacturing, healthcare, or agriculture. These domains utilize images vastly different from the typical content found online, rendering generic models ill-equipped to extract meaningful information.

Domain-Specific LVMs: Tailored Solutions for Enhanced Performance

This limitation underscores the crucial role of domain-specific LVMs. These models are specifically adapted to handle the unique characteristics of images within a particular field. Landing AI, a pioneer in this space, takes an innovative approach. By leveraging approximately 100,000 unlabeled images specific to a domain, they fine-tune a generic LVM, dramatically enhancing its performance. This approach translates to a significant reduction in the amount of labeled data needed to train the model, typically by 10–30%.

A New Dawn Across Industries: Unlocking the Potential of Domain-Specific LVMs

The implications of this development are far-reaching, impacting numerous industries:

- Manufacturing: LVMs can analyze production lines for defects, optimize processes, and automate quality control, leading to increased efficiency and product quality.

- Healthcare: LVMs can assist in medical imaging analysis, enabling faster and more accurate diagnoses, ultimately improving patient outcomes.

- Retail: LVMs can enhance product identification, optimize inventory management, and personalize customer experiences, driving sales and customer satisfaction.

- Agriculture: LVMs can monitor crop health, detect disease outbreaks, and optimize resource allocation, promoting sustainable agricultural practices.

Beyond improved performance, domain-specific LVMs offer several advantages:

- Reduced training costs: Utilizing unlabeled data significantly minimizes the need for expensive labeled data, making model development more cost-effective.

- Faster deployment: Faster training times allow for quicker deployment and implementation of LVMs in real-world applications, accelerating advancements.

- Improved accuracy: By understanding the nuances of specific image types, domain-specific models deliver significantly more accurate results.

Navigating the Challenges: Ethical Considerations and Data Bias

However, with this transformative technology, certain challenges arise:

- Ethical implications: The use of LVMs raises ethical concerns, particularly in areas like healthcare and manufacturing. Ensuring fairness, transparency, and accountability in their application is crucial.

- Data bias: Biases present in training data can be amplified by LVMs, leading to discriminatory outcomes. Mitigating bias through diverse datasets and responsible development practices is essential.

- Limited data availability: Access to large amounts of domain-specific data can be challenging for certain industries. Exploring innovative data collection and augmentation techniques is necessary.

A Vision for the Future: Advancing Image Processing with LVMs

Despite these challenges, the potential of domain-specific LVMs is undeniable. Landing AI’s pioneering approach serves as a catalyst for a future where LVMs unlock groundbreaking advancements across various fields. As research and development continue, we can expect to see even more innovative applications of this powerful technology, shaping the future of image processing and artificial intelligence. The LVM revolution is upon us, promising to redefine how we see and interact with the world around us.